Tests and experiments are the lifeblood of good marketing. Testing frees you from clichés, received wisdom and the safe paths others follow. Instead, you can explore what works for your audience and shape your actions according to data. In other words, you gain power and control. Are you ready for it?

Definition of A/B testing

An A/B test is a randomized controlled experiment: a type of hypothesis testing. You present two variants that differ in just one element to two randomly assigned groups of users to see which version performs better.
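The "randomized" part can be sketched in a few lines. This is a minimal illustration, not a description of any particular tool: the function name `assign_variant`, the experiment label and the hashing approach are all assumptions made for the example. Hashing the user ID keeps the split roughly even while making sure the same user always sees the same variant.

```python
import hashlib

def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Map a user to one variant, deterministically and roughly evenly.

    Hypothetical helper: the experiment name is mixed into the hash so that
    the same user can land in different groups in different experiments.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)  # index of the chosen variant
    return variants[bucket]

# Usage: the same user always gets the same answer for a given experiment.
group = assign_variant("user-123", "subject-line-test")
```

Passing a longer tuple of variants, for example `("A", "B", "C")`, splits users across more than two groups in the same way.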

For example, you can send the same newsletter with two different subject lines to see which one will be opened more often.
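To judge whether the difference in open rates is more than chance, one common option is a two-proportion z-test. The sketch below is an assumption about how you might evaluate such a test, not a method prescribed in this article; the function name and the counts in the usage line are made up for illustration.

```python
from math import erfc, sqrt

def two_proportion_z_test(opens_a: int, sent_a: int, opens_b: int, sent_b: int):
    """Compare two open rates; returns (rate_a, rate_b, z, two-sided p-value)."""
    rate_a = opens_a / sent_a
    rate_b = opens_b / sent_b
    # Pooled rate under the null hypothesis that both subject lines perform the same.
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (rate_b - rate_a) / std_err
    p_value = erfc(abs(z) / sqrt(2))  # two-sided p-value from the normal distribution
    return rate_a, rate_b, z, p_value

# Hypothetical counts: 1,000 emails per subject line.
rate_a, rate_b, z, p_value = two_proportion_z_test(200, 1000, 260, 1000)
```

A small p-value (conventionally below 0.05) suggests the difference between the subject lines is unlikely to be a coincidence.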

You can also test more than 2 variants.

5 steps to successful A/B tests: where to start

Perhaps you wonder what to test in the first place. Rather than starting from a list of elements, I advocate a slightly different approach, based on the excellent book “A/B Testing: The Most Powerful Way to Turn Clicks into Customers” by Dan Siroker and Pete Koomen, founders of Optimizely. Begin with this 5-step formula:

Define success (and quantifiable success metrics)

What is the core aim of your website, the reason for its existence? Is it to drive leads or sell products, to make it easier to book a visit to a brick-and-mortar salon, or to encourage donations? Identify that major aim and state it clearly. Then decide on a suitable success metric: the number of leads? Sales?

Identify bottlenecks

OK, now let’s move to the site analysis and find bottlenecks: major obstacles, or “places when you lose the most momentum in moving users through your desired series of actions.” For example, you might observe that customers in your e-store have problems completing the transaction: they add products to the cart but rarely buy. This indicates that the check-out is the thing to work on.

Construct a hypothesis

The two previous steps should guide you towards a working hypothesis about what should be improved. It’s science, remember? Take a critical look at your check-out process and decide which element you’d like to test first.

Bear in mind that you should test one thing and one thing only at a time: for example, test only the color of the button, not the color and the copy together.

The hypothesis should sound like this:

If I change … from … to …, I will achieve…

E.g.: If I change the color of the button from red to yellow, I’ll achieve higher conversion.

As you see, the hypothesis includes:

* Tested element

* How to alter it

* What result you expect it to bring
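The three parts of the hypothesis can be written down as a small record, which makes it harder to skip one of them. This is only a sketch: the class `Hypothesis` and its field names are illustrative assumptions, not something taken from the book.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Hypothesis:
    """One A/B test hypothesis (hypothetical structure for illustration)."""
    element: str          # the tested element
    change_from: str      # its current state
    change_to: str        # how you alter it
    expected_result: str  # what you expect to achieve

    def statement(self) -> str:
        # Fills in the "If I change ... from ... to ..., I will achieve ..." template.
        return (
            f"If I change {self.element} from {self.change_from} "
            f"to {self.change_to}, I will achieve {self.expected_result}."
        )

button_test = Hypothesis(
    element="the color of the button",
    change_from="red",
    change_to="yellow",
    expected_result="higher conversion",
)
print(button_test.statement())
```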

Prioritize

You have limited time, so assess which tests can have the biggest impact on your success metric. To begin with, test the essential features or elements.
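One simple way to put this into practice is to score each idea by estimated impact against the time it takes. The scoring rule below (impact divided by effort) is an assumption for illustration, not a formula from the book, and the names `Idea` and `prioritize` as well as all the numbers are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Idea:
    """A candidate test with your own rough estimates (hypothetical fields)."""
    name: str
    expected_impact: int  # 1 (low) to 5 (high), estimated effect on the success metric
    effort_days: float    # estimated time needed to build and run the test

def prioritize(ideas):
    """Sort ideas so the highest impact per day of effort comes first."""
    return sorted(ideas, key=lambda idea: idea.expected_impact / idea.effort_days, reverse=True)

# Made-up backlog for an e-store with a check-out bottleneck.
backlog = [
    Idea("Footer link color", expected_impact=1, effort_days=1),
    Idea("Check-out button copy", expected_impact=4, effort_days=1),
    Idea("One-page check-out", expected_impact=5, effort_days=5),
]
ordered = prioritize(backlog)
```

The estimates are subjective, so treat the resulting order as a starting point for discussion rather than a verdict.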

Test

OK, now you’re ready. Run the test!

What can I test?

Remember that you can experiment with every single element of your emails, website (contact forms, landing pages, recommendation frames) and UI. That includes pricing, feature lists, streaming quality, page load time… Anything that comes to mind. Test layout, buttons, copy, colors, sending time…

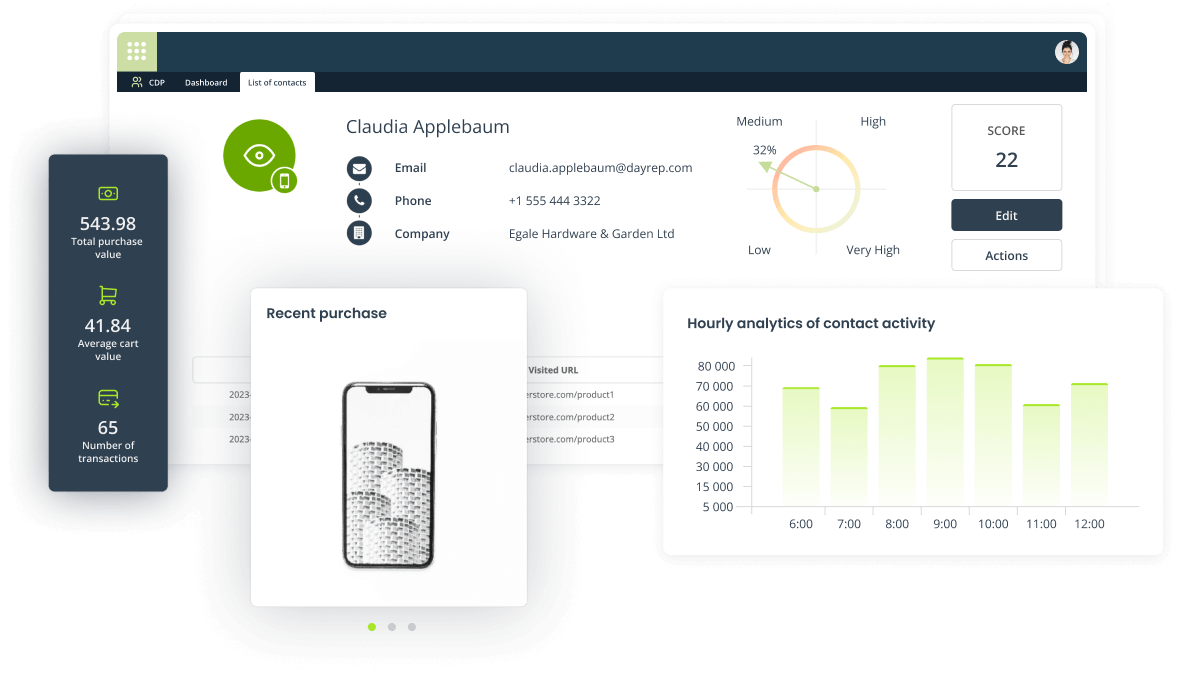

Testing dynamic content with the Marketing Automation platform.

In 2007 Dan Siroker quit his job at Google to join Barack Obama’s campaign team. He focused on optimizing the landing page with the email sign-up form. He started by testing the copy on the button; then he tested various videos and photos (contrary to expectations, the audience responded better to photos than to videos). The winning combination (the image of the candidate with his wife and daughter + “Change we believe in” copy + “Learn more” button) improved the subscription rate by 40.6 percent.
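An improvement quoted this way is usually a relative lift: the change in the conversion rate divided by the original rate. Here is a small sketch of that arithmetic; the function name and the rates in the usage line are hypothetical and are not the campaign’s actual figures.

```python
def relative_lift(baseline_rate: float, variant_rate: float) -> float:
    """Return the relative improvement of the variant over the baseline, in percent."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (variant_rate - baseline_rate) / baseline_rate * 100

# Hypothetical rates: a sign-up rate rising from 10% to 12% is a 2-point
# absolute gain, but a relative lift of about 20 percent.
lift = relative_lift(0.10, 0.12)
```

Keeping the two apart matters when you report results: a large relative lift can correspond to a small absolute change.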

Netflix, on the other hand, experiments with everything, including things it has learned from customer feedback, and the results can reveal a discrepancy between what users say and what they do. Many Netflix visitors said that browsing the titles available on the platform was the one thing they wanted to do before signing up. That seems reasonable: people want to make sure they’ll find valuable movies before they hand over their contact data. But Netflix, being Netflix, refused to stop at survey results and ran tests. The team applied the following hypothesis:

If users can browse content titles before signing up, it will increase the number of users who register.

Then they created five variations with varying degrees of content searchability, each allowing visitors to know more before subscribing. The results? Contrary to what users had said, the original version delivered the best results.
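When several variations run against one original, each comparison gets its own chance of a false alarm. A common safeguard is the Bonferroni correction, which divides the significance threshold by the number of variations. The sketch below shows that idea under stated assumptions: it is not how Netflix analyzed its test, and the function names and counts are hypothetical.

```python
from math import erfc, sqrt

def p_value_vs_control(conv_c: int, n_c: int, conv_v: int, n_v: int) -> float:
    """Two-sided p-value of a two-proportion z-test against the control."""
    pooled = (conv_c + conv_v) / (n_c + n_v)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_c + 1 / n_v))
    z = (conv_v / n_v - conv_c / n_c) / std_err
    return erfc(abs(z) / sqrt(2))

def compare_to_control(control, variants, alpha=0.05):
    """Return {name: (rate difference vs control, significant?)}.

    control is (conversions, visitors); variants maps a name to the same pair.
    """
    threshold = alpha / len(variants)  # Bonferroni-corrected threshold
    conv_c, n_c = control
    results = {}
    for name, (conv_v, n_v) in variants.items():
        difference = conv_v / n_v - conv_c / n_c
        p_value = p_value_vs_control(conv_c, n_c, conv_v, n_v)
        results[name] = (difference, p_value < threshold)
    return results

# Made-up numbers: a negative difference means the variation did worse than the original.
outcome = compare_to_control((160, 1000), {"v1": (155, 1000), "v2": (100, 1000)})
```

A variation only counts as a real change if it is flagged as significant, and the sign of the difference tells you whether it beat the original or lost to it.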

To sum up

Test everything.

Start with the essential elements of your website, those related to its raison d’être, then move on to details that contribute indirectly to your success. At the same time, make sure that you never optimize minor pieces at the expense of the crucial ones (like improving blog readership while lowering sales).
